So, I finally finished a model.

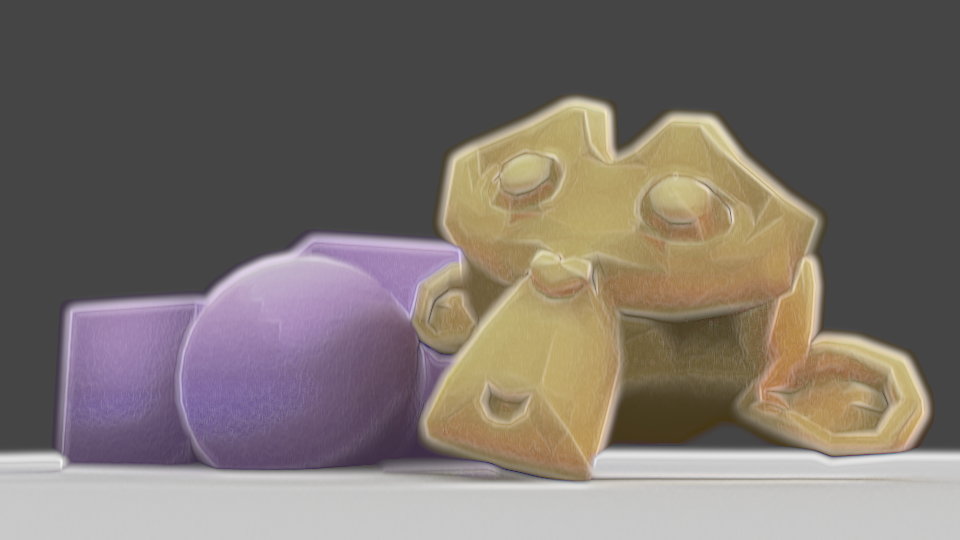

I wanted to make my Mariku model, but I decided to do a test model of another character first, both for practice and to see how my workflow would go. I chose Effie from Descender, before she was Queen Between.

I did two different lighting angles to test it. I’m fairly satisfied with my shader at this point… but not with how I’m using it. I need to get more used to it, and to the adjustments I have to make to account for it. For example, the legs show through in several places in both images where I forgot to turn on the clipping mask. I’d like a way to render everything as if it were 2D, all on one alpha layer if possible, but I don’t know how I’d go about that with the parts as separate objects.

I also see I should change my linework. I was quite happy with the sketchy effect I was getting, but in practice I don’t have the control I want; the nostrils, for example, get lines when I don’t want them to, and it’s just… inconvenient having to wait to see how it’ll look. I want as close to real-time feedback as I can get.

I also need to work out the rigging. I used a proper armature this time, and then used the Data Transfer modifier to transfer the weights onto the clothes. She lacks her jacket in the render because I couldn’t get the modifier to copy the weights onto it and didn’t want to have to stop and rig it manually. I wanted to use the Mesh Deform modifier, but it was finicky and unreliable on this model, and having to unbind and rebind everything every time I changed the cage would be a pain. I also haven’t found a solution for the unexpected behaviour triggered by having Mesh Deform and an armature on the same mesh.

I also just need to get better. The mesh quality doesn’t hold up to close-ups, and the anatomy isn’t good enough. Especially the legs. I’m not good at them.

Plus, I need to work out a better way to do the colours. I mixed them individually where needed, but because each colour has its own set of values to go with it, that’s awkward to manage. I could use two masks, one for all the main colour and one for all the shadow, but I worry that would damage the watercolour effect I’m going for by being too perfect and clean. With multiple colours, each mask can be modified on its own, such as adding edge soak at the borders.
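A rough sketch of that trade-off in plain Python, outside Blender. This is only one reading of the shared-mask idea, not the actual shader: the colour values, mask values, and the names `lerp` and `shade` are all made up for illustration. It blends a main/shadow colour pair along a row of pixels, first with a hard-edged mask and then with one whose edge has been softened by hand.

```python
def lerp(a, b, t):
    """Linear blend between two RGB tuples; t=0 gives a, t=1 gives b."""
    return tuple(x + (y - x) * t for x, y in zip(a, b))


def shade(main, shadow, shadow_mask):
    """Blend one main/shadow colour pair across a row of mask values."""
    return [lerp(main, shadow, t) for t in shadow_mask]


# Hypothetical colours and masks, purely for illustration.
skin_main = (1.0, 0.8, 0.6)
skin_shadow = (0.5, 0.4, 0.5)

hard_mask = [0.0, 0.0, 1.0, 1.0]    # clean edge: jumps straight to shadow
soft_mask = [0.0, 0.25, 0.75, 1.0]  # edge adjusted by hand

hard_row = shade(skin_main, skin_shadow, hard_mask)
soft_row = shade(skin_main, skin_shadow, soft_mask)
```

With a single shared mask, every colour pair gets the same edge, which is convenient but uniform. Keeping a mask per colour means each one can have its border treated differently, at the cost of more values to manage.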

Lastly, I need to change how I’ll alter shading. The shader just won’t perform well enough for me to modify it in real time with vertex paint, as I’d wanted to. My experience of Blender’s texture painting makes me think it probably won’t handle the texture-based method in a real scene, either. Normal editing is a nuisance, though. I’m uncertain what to do about it.

Still, I want to work something out. I’m making progress. I just wish I didn’t feel like I was constantly at the “If it was just a bit better…” stage.